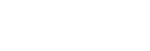

The conversation around artificial intelligence has decisively shifted. Enterprise leaders are no longer asking if they should adopt AI, but rather how they can embed it securely into their core operations. However, moving from a localized proof-of-concept to full-scale generative AI implementation is a highly complex engineering endeavor.

Without the right architecture, embedding Large Language Models (LLMs) into legacy systems can result in massive technical debt, data leaks, and operational bottlenecks. For Chief Technology Officers (CTOs) and IT leaders, partnering with a specialized generative AI integration company is the most reliable strategy to mitigate these risks and accelerate time-to-value.

If you are preparing to modernize your infrastructure, this guide breaks down the hurdles of AI adoption and how expert generative AI consulting services can help you navigate them.

-

What Are the Main Challenges in Implementing Generative AI?

When executives ask, “What are the main challenges in implementing generative AI?”, the answers rarely revolve around the AI models themselves. Modern models are exceptionally capable. The true friction lies at the intersection of AI, existing enterprise infrastructure, and data governance.

Here are the key challenges in implementing generative AI across enterprise environments:

a. Data Silos and Poor Data Readiness

An AI model is only as useful as the data it can reach. In most enterprises, critical business data is fragmented across CRMs, ERPs, flat files, and legacy databases. If an LLM cannot draw on a unified, clean data pipeline, it is far more likely to produce inaccurate or fabricated outputs (hallucinations). To solve this, organizations frequently rely on AI data consulting services to architect robust data lakes and implement vector databases (like Qdrant or Pinecone) that supply the model with relevant, verified context at query time.
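To make the retrieval idea concrete, here is a minimal sketch of how relevant records can be selected and placed into a prompt. It is an illustration under loud assumptions: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the in-memory list stands in for a vector database such as Qdrant or Pinecone, and the sample documents are invented.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy bag-of-words vector (assumption: a real system would call an embedding model)."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query: str, documents: list, k: int = 2) -> list:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]

def build_prompt(question: str, documents: list) -> str:
    """Ground the question in retrieved context before it reaches the LLM."""
    context = "\n".join(f"- {d}" for d in retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

# Invented sample records standing in for unified enterprise data.
DOCS = [
    "invoice 1042 was paid on 3 march",
    "the crm lists acme corp as an active customer",
    "warehouse b holds 120 units of part x",
]
```

Calling `build_prompt("is acme corp an active customer", DOCS)` places the CRM record at the top of the context, which is the behavior a production retrieval layer aims for at much larger scale.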

b. Security, Privacy, and Compliance

Sending proprietary source code, financial records, or customer data to a public API without contractual and technical safeguards can breach internal security policy and data-protection regulations. Achieving compliant generative AI integration requires strict tenant isolation, combined with either an enterprise-managed model service (like Azure OpenAI) reached through a secure API gateway, or self-hosted open-weight models (such as Llama 3) deployed within a virtual private cloud (VPC), so that third parties do not retain your data.
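One small piece of this control set can be sketched in a few lines: masking obviously sensitive values before a prompt leaves your network. The patterns below are illustrative assumptions (an email address and a US-style Social Security number), not a complete or compliance-grade filter, and the function name `redact` is hypothetical.

```python
import re

# Illustrative patterns only; a real deployment would need a much broader,
# tested set of detectors and should not rely on regular expressions alone.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

def redact(text: str) -> str:
    """Replace each match with a bracketed label such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```

A gateway could apply `redact` to every outbound prompt, so that the model sees `[EMAIL]` in place of the customer's actual address.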

c. Legacy System Compatibility

Many core business systems lack the modern RESTful APIs or GraphQL endpoints necessary to communicate with AI agents. Bridging the gap between a cutting-edge LLM and a 15-year-old monolithic application requires advanced middleware engineering and custom connector development.
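As a rough illustration of what a custom connector does, the sketch below converts a fixed-width export line, a format many older systems still produce, into a structured record that an API layer or AI agent could consume. The field layout and names are hypothetical and would come from the legacy system's own file specification.

```python
# Hypothetical fixed-width layout: (field name, start column, end column).
LAYOUT = [
    ("customer_id", 0, 6),
    ("name", 6, 26),
    ("balance_cents", 26, 36),
]

def parse_record(line: str) -> dict:
    """Slice one fixed-width line into named fields and convert types."""
    record = {name: line[start:end].strip() for name, start, end in LAYOUT}
    record["balance_cents"] = int(record["balance_cents"])
    return record

# Example line built to match the assumed layout above.
SAMPLE_LINE = "000042" + "Jane Doe".ljust(20) + "0000012550"
```

Here `parse_record(SAMPLE_LINE)` yields a dictionary with `customer_id`, `name`, and an integer `balance_cents`, which can then be serialized as JSON for downstream services.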

-

Industry Deep-Dive: Overcoming Vertical-Specific Integration Hurdles

The complexities of generative AI integration services multiply when dealing with highly regulated or deeply specialized industry software.

Take the automotive retail sector as a prime example. Dealerships run on large, complex Dealership Management Systems (DMS) that handle everything from inventory and financing to service scheduling. Applying best practices for integrating generative AI with a dealership DMS requires a surgical approach.

Instead of a complete system overhaul, engineers must build AI middleware that securely queries the DMS database in real time. This allows for the creation of proactive AI agents that can automatically draft personalized lease-renewal emails to customers, or predict service bay bottlenecks, all without disrupting the legacy DMS architecture.
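A simplified sketch of that middleware pattern follows. It assumes a hypothetical `leases` table and uses an in-memory SQLite database as a stand-in for the DMS; a real DMS would typically be reached through vendor-approved, read-only interfaces. The middleware only reads data and prepares a prompt, and the call to the LLM itself is left out.

```python
import sqlite3
from datetime import date, timedelta

def expiring_leases(conn: sqlite3.Connection, today: date, window_days: int = 60) -> list:
    """Read-only query: leases ending within the next window_days days."""
    cutoff = (today + timedelta(days=window_days)).isoformat()
    return conn.execute(
        "SELECT customer_name, vehicle, lease_end FROM leases "
        "WHERE lease_end BETWEEN ? AND ? ORDER BY lease_end",
        (today.isoformat(), cutoff),
    ).fetchall()

def renewal_prompt(customer_name: str, vehicle: str, lease_end: str) -> str:
    """Build the instruction that would be sent to the LLM."""
    return (
        f"Draft a short, friendly lease-renewal email to {customer_name}. "
        f"Their lease on the {vehicle} ends on {lease_end}."
    )

def demo_connection() -> sqlite3.Connection:
    """Stand-in for the DMS database, with invented sample rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE leases (customer_name TEXT, vehicle TEXT, lease_end TEXT)")
    conn.executemany(
        "INSERT INTO leases VALUES (?, ?, ?)",
        [("Ana Ruiz", "2022 Sedan", "2025-02-10"), ("Ben Cole", "2023 SUV", "2025-09-01")],
    )
    return conn
```

Storing dates as ISO 8601 strings keeps the `BETWEEN` comparison correct in SQLite, and parameterized queries keep the middleware from concatenating untrusted values into SQL.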

According to AI governance guidance published by organizations like IBM, tailoring AI integration to specific industry workflows while maintaining strict data guardrails is a defining factor in long-term AI success.

-

The Value of Comprehensive Generative AI Consulting & Development Services

Attempting to build an enterprise AI architecture with an in-house team that lacks deep Machine Learning Operations (MLOps) experience often leads to delayed launches and bloated budgets.

Engaging a dedicated agency that provides end-to-end generative AI consulting & development services helps ensure that your system is built on a scalable foundation. A true engineering partner does not just connect an API; it architects the entire lifecycle:

- Strategic Roadmapping: Identifying high-ROI use cases and auditing your current data infrastructure.

- Custom Middleware Development: Building the necessary bridges between your secure data and the LLM via Retrieval-Augmented Generation (RAG).

- Human-in-the-Loop (HITL) Guardrails: Designing workflows where AI performs the heavy lifting, but human experts retain final approval authority, drastically reducing enterprise risk.
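The human-in-the-loop guardrail in the last item can be sketched as a simple approval gate: AI-generated drafts start in a pending state and cannot be released until a named reviewer approves them. The class and function names here are hypothetical, and a production workflow would add persistence, audit logging, and access control.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"

@dataclass
class Draft:
    """An AI-generated artifact awaiting human review."""
    content: str
    status: Status = Status.PENDING
    reviewer: Optional[str] = None

def review(draft: Draft, reviewer: str, approve: bool) -> Draft:
    """Record the human decision and who made it."""
    draft.status = Status.APPROVED if approve else Status.REJECTED
    draft.reviewer = reviewer
    return draft

def release(draft: Draft) -> str:
    """Return the content only if a human has approved it."""
    if draft.status is not Status.APPROVED:
        raise PermissionError("draft has not been approved by a human reviewer")
    return draft.content
```

Because `release` raises an error for anything other than an approved draft, downstream code such as an email sender cannot skip the review step by accident.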

-

Transform Your Operations with Innotech

The window for early adoption is closing. To secure a sustainable competitive advantage, your organization must transition from isolated AI experiments to deeply integrated, AI-native workflows.

At Innotech, we specialize in solving the most complex integration challenges. As a premier partner for enterprise modernization, our teams engineer bespoke, highly secure AI architectures that plug directly into your existing operational fabric—ensuring strict data privacy and immediate operational efficiency.

Ready to move from AI theory to enterprise reality? Discover how our Custom Generative AI Development Services can seamlessly integrate intelligent automation into your most critical business systems. Schedule a technical consultation with our lead architects today.