LLM Data Privacy in the US: Navigating SOC2, HIPAA, and Enterprise AI Compliance

For Chief Technology Officers (CTOs) and tech leaders in the United States, the mandate is clear: integrate Generative AI to stay competitive, but do not compromise data security. As enterprises rush to adopt Large Language Models (LLMs), they hit a massive regulatory wall. Feeding proprietary source code, financial records, or Protected Health Information (PHI) into public AI models is a direct violation of frameworks like SOC2 and HIPAA.

When creating a custom LLM, understanding data privacy is step one. This article explores the architectural strategies and engineering methodologies required to build enterprise-grade, compliant AI systems.

-

The Enterprise AI Compliance Landscape

Standard, out-of-the-box LLMs are designed for general utility, not enterprise governance. In the US market, two compliance frameworks dictate how AI must be architected:

- SOC2 (Service Organization Control 2): Focuses on security, availability, processing integrity, confidentiality, and privacy. An AI system must prove that customer data is isolated, encrypted, and not used to train global models.

- HIPAA (Health Insurance Portability and Accountability Act): For healthcare and InsurTech, any system touching PHI must have strict access controls, audit logs, and a Business Associate Agreement (BAA) with the LLM provider.

To meet these strict requirements, organizations cannot rely on consumer-grade AI. They need a purpose-built AI architecture.

-

Architecture Strategies for LLM Data Privacy

At Innotech, our consulting approach does not force a one-size-fits-all solution. Depending on the data sensitivity and compliance needs of our US clients, we implement one of two primary LLM deployment strategies:

Strategy A: Secured API Tier with Enterprise Governance

For companies that need the cognitive power of state-of-the-art models (like GPT-4 or Claude 3) but require strict compliance, we design architectures around Secured API Tiers.

- Zero Data Retention: We leverage enterprise agreements where API providers explicitly commit that customer prompts and generated responses are never used to train their foundational models.

- Cloud-Native Auditing: By routing AI traffic through enterprise environments like Google Cloud (Vertex AI) or Microsoft Azure OpenAI, we enforce Data Processing Agreements (DPA). This ensures data logs are kept only for security/fraud detection within a specified window and are governed by existing SOC2 and HIPAA guardrails.

Strategy B: Self-Hosted / On-Premise LLMs (Absolute Privacy)

For defense contractors, deep-tech finance, or healthcare systems where data cannot leave the corporate firewall under any circumstances, we deploy Self-Hosted AI architectures.

- Data Sovereignty: We fine-tune and deploy powerful open-source models (such as Llama 3, DeepSeek, or CodeLlama) directly onto dedicated GPU clusters (A100/H100) within the client’s private cloud (AWS VPC) or physical on-premise servers.

- The Trade-off: While self-hosting requires higher upfront compute investments, it offers absolute data privacy. Zero bytes of data ever leave your controlled environment, making SOC2 and HIPAA compliance significantly easier to audit.

-

Engineering Data Privacy: The Innotech Methodology

Choosing the right deployment model is only half the battle. How the application handles data at the application layer is what determines true compliance. Our engineering teams implement a “Defense in Depth” methodology for LLM integration:

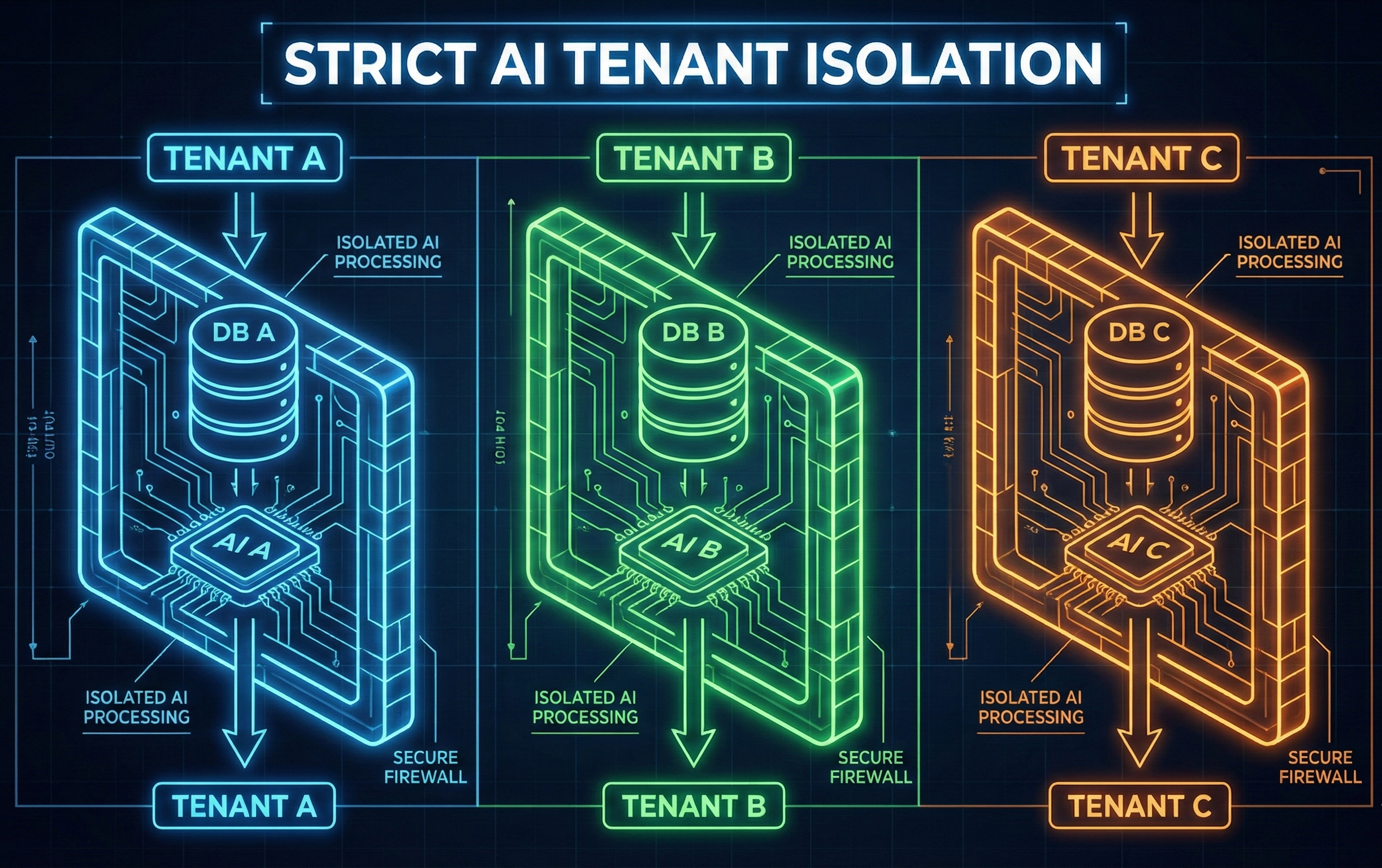

Strict Tenant Isolation

Multitenancy is a massive risk in AI. If an LLM has access to a shared database, it might accidentally leak Client A’s data to Client B. We prevent this by provisioning isolated databases per tenant (utilizing separate Postgres schemas and dedicated Qdrant vector databases for RAG). There is zero data bleeding across tenant boundaries.

Complete Data Lifecycle Control

Under regulations like CCPA or GDPR, users have the “Right to be Forgotten.” Our AI architectures are built with complete deletion protocols. If a user or enterprise deletes an account, the entire data footprint—including cached code, requirement specs, RAG memory, and vector embeddings—is permanently wiped, not just soft-deleted.

Tool/Function Registries and Guardrails

To prevent AI from executing unauthorized actions or hallucinating data access, we route all AI actions through a strict Tool/Function Registry. The AI is only permitted to call pre-registered, secure internal APIs using structured JSON schemas. If an LLM attempts an unauthorized data query, the Dependency Guard instantly blocks the action, preventing data exfiltration.

Conclusion: Bridging the Gap Between AI and Compliance

Adopting AI in a heavily regulated US market does not mean you have to sacrifice innovation. By choosing the right deployment environment—whether a secured enterprise API or a fully air-gapped, self-hosted open-source model—and enforcing strict architectural guardrails, CTOs can deploy LLMs that are both powerful and fully compliant with SOC2 and HIPAA.

Ready to integrate AI into your enterprise workflows without compromising security? Book a free AI Infrastructure Consultation with our engineering experts today to evaluate your data readiness and map out a compliant custom LLM architecture.